Optical Character Recognition in Scanned PDFs

The ability to physically scan documents to a computer and store them in an electronic format is amazing. No longer do we have to keep reams of paperwork in filing cabinets for years after it was produced.

However, scanning documents does come with drawbacks:

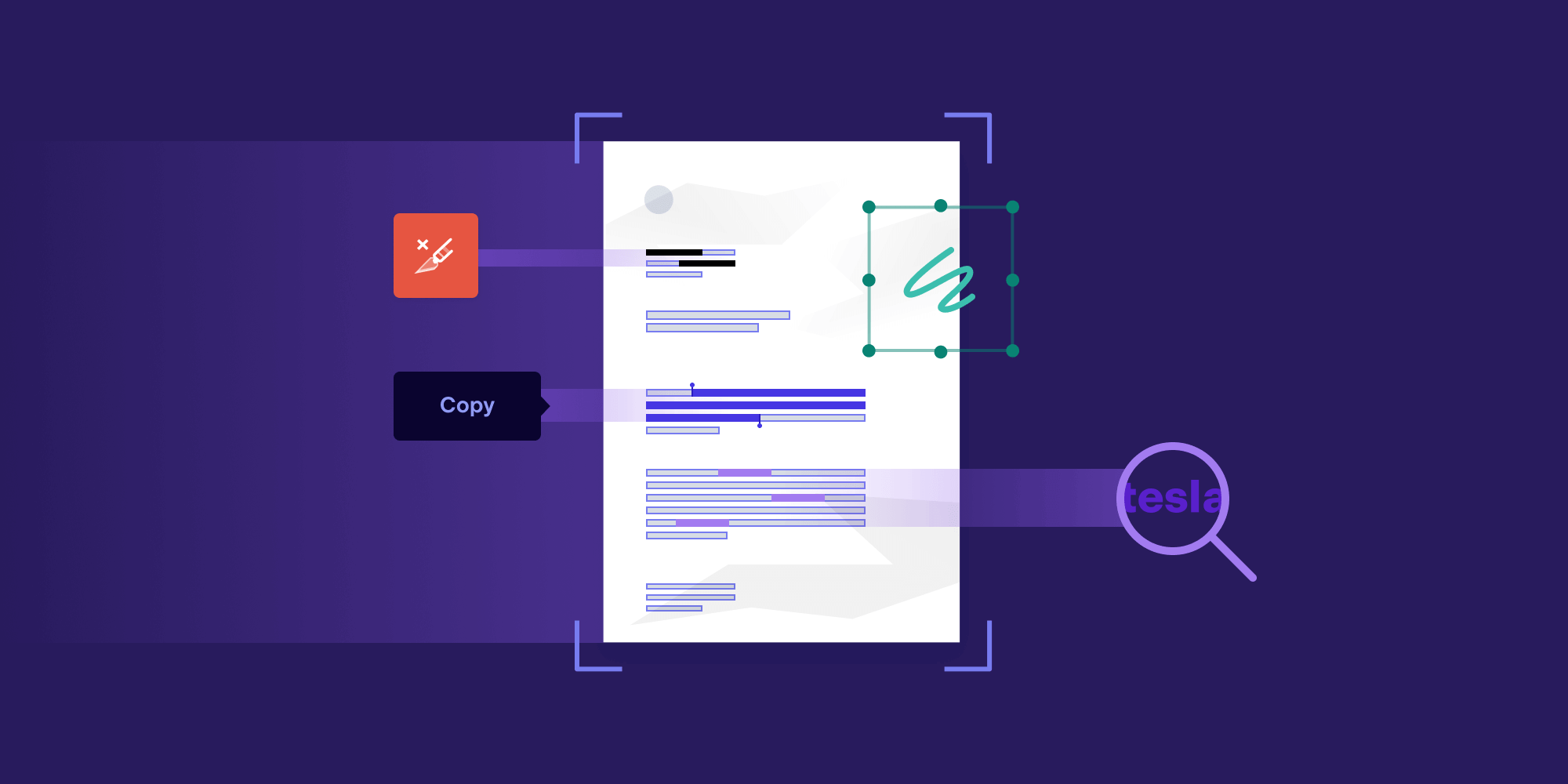

- There’s no ability to search text.
- There isn’t a way to highlight text, underline text, etc.
- Users are unable to copy and paste from documents.
- It’s hard to redact information quickly and efficiently.

This is where Optical Character Recognition (OCR) comes in.

What Is OCR?

To define OCR, let’s look at the definition from Wikipedia:

Optical character recognition or optical character reader (OCR) is the electronic or mechanical conversion of images of typed, handwritten or printed text into machine-encoded text, whether from a scanned document, a photo of a document, a scene-photo (for example the text on signs and billboards in a landscape photo) or from subtitle text superimposed on an image (for example: from a television broadcast).

Essentially, OCR is a process our brains perform every day when we read. Machines can perform it too, interpreting the text in an image so that software can do more with that image.

But why is this important?

As mentioned in the introduction, more and more analog material is being digitized, usually in an image-like format. These image formats hold no information about the text they contain, meaning a layer of information is missing.

By using OCR technology, you can create a digitized textual layer of information, which allows users to perform text-based operations such as searching, annotating, and copying.

Why Use OCR on a PDF?

Because of the flexibility of the PDF format, it’s long been a go-to standard for the digitization of various sources. For example, when you scan a document, you often have the option to save it as a PDF. Sometimes it’s even the default.

When a document is scanned, you’re essentially taking a picture. Scanning software then embeds the image directly into a PDF for further use.

The issue with this is that the resulting PDF contains only an image and no textual information, which means you’ll miss out on a variety of PDF features that enhance the way data can later be analyzed.

OCR in PDF Use Cases

To give an example of how OCR might come into play with PDFs, I’ve written up a few potential use cases.

Searching an Archived Document

Scanning documents to save them in an electronic format is a great way of filing away data.

But, as a hypothetical example, what happens when you’re trying to look for all documents relating to your Tesla car purchase? Well, you could open every document on your device and look through to find the related content. Or you could use a search tool to scan through every document for the word “Tesla.”

If a document is only a scan, there’s no way to search for the word “Tesla” because the PDF holds an image representation of the text and not any textual content. But if you perform OCR on your documents, you can create a text layer for searching at a later date. This would allow you to search for the word “Tesla” and find all the relevant documents in no time.
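As a minimal sketch of what a text layer makes possible, assume the OCR text of each scanned file has already been extracted into a plain string. The file names, the sample text, and the `find_documents` helper are hypothetical and only illustrate the idea; they are not part of any PSPDFKit API.

```python
def find_documents(text_layers: dict[str, str], query: str) -> list[str]:
    """Return the names of documents whose OCR text contains the query."""
    needle = query.casefold()  # case-insensitive comparison
    return sorted(
        name for name, text in text_layers.items() if needle in text.casefold()
    )


# Hypothetical OCR output, keyed by file name.
text_layers = {
    "invoice-2021.pdf": "Purchase agreement for one Tesla Model 3",
    "insurance.pdf": "Policy covers the insured TESLA vehicle",
    "utility-bill.pdf": "Electricity usage for March",
}

matches = find_documents(text_layers, "Tesla")
print(matches)
```

Without the text layer there would be nothing to put in `text_layers`, which is why a plain scan cannot be searched.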

Marking Up a Document

Another useful feature of PDFs is the ability to mark up documents with annotations. With many readers, you can simply highlight, underline, add notes to text, and more. Again, with a scanned PDF, there’s no textual information to mark up, so these features of a PDF reader are unavailable and the content is less interactive.

However, with a document where OCR has been performed, there’s text to work with, and you can then annotate it accordingly.

Redacting Sensitive Information

Going back to our Tesla document example, imagine now you want to hide the make of car you bought before you forward the relevant documents to someone else. With a simple scanned image, this would make for a laborious task of scrolling through each document and creating redaction squares to cover the word “Tesla.”

If OCR has already been applied, you’ll have a text content layer that can be searched. Each search result comes with the area of the page it occupies, and you can use those areas to create redactions for all the instances found.

With a quick and easy command, it’s possible to redact all mentions of “Tesla,” thereby saving huge amounts of time.
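To illustrate how search results turn into redactions, here is a small sketch that assumes OCR has produced a list of words with their page and bounding box. The `Word` type, the `redaction_rects` helper, the coordinates, and the padding value are all assumptions made for this example, not an actual product API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Word:
    """One OCR-recognized word and where it sits on the page (hypothetical)."""
    text: str
    page: int
    x: float
    y: float
    width: float
    height: float


def redaction_rects(words, term, padding=1.0):
    """Return (page, x, y, width, height) for every word matching the term."""
    needle = term.casefold()
    rects = []
    for w in words:
        # Strip surrounding punctuation so "Tesla," still matches "Tesla".
        if w.text.strip(".,;:!?\"'()").casefold() == needle:
            rects.append((w.page, w.x - padding, w.y - padding,
                          w.width + 2 * padding, w.height + 2 * padding))
    return rects


words = [
    Word("Your", 0, 72.0, 700.0, 28.0, 12.0),
    Word("Tesla,", 0, 104.0, 700.0, 36.0, 12.0),
    Word("invoice", 0, 144.0, 700.0, 40.0, 12.0),
    Word("TESLA", 1, 72.0, 650.0, 38.0, 12.0),
]
rects = redaction_rects(words, "Tesla")
print(rects)
```

The small padding makes each rectangle slightly larger than the word so that no edge of a glyph stays visible after redaction.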

Copying and Pasting Ability

What if you open a document and find an amazing quotable sentence, only to discover the document is just a scanned image? Well, no one wants to type the whole sentence out, do they?

With OCR applied to the document, you can highlight and copy text with any quality PDF reader, saving yourself time and avoiding the mistakes that creep in when retyping it yourself.

How Does OCR Work?

Now that we’ve covered why you might need to use OCR, you may be wondering how it works. A machine is not equal to a human brain (for now at least), and you can’t hand a computer an image and say “read this.” Instead, algorithms have to be created to teach the computer how to read textual information from an image.

There are various ways of implementing OCR algorithms, but here we’ll describe the steps PSPDFKit takes to perform OCR.

Identifying Areas of Text

The first step is recognizing where text exists in an image. When humans look at an image, we’re able to see vibrant colors and detect edges of letters, even when the contrast is low or there is a noise source such as uneven lighting. For software to do the same, many filters and algorithms are used to “clean up” an image to help a computer better recognize text.

Various image manipulation techniques, such as binarization, skew correction, and noise reduction filters, are used to enhance the original image. The goal is to make the text stand out with the greatest possible contrast so that the following steps can produce the best results.

With a clean image to work with, it’s much easier to detect possible text on a page and mark it for processing.
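Binarization is the easiest of these cleanup steps to show in code. The sketch below uses Otsu’s method, a common way of choosing a global threshold, on a tiny grayscale image stored as nested lists. This is an illustrative choice and not necessarily the technique PSPDFKit uses; the sample image and function names are made up for the example.

```python
def otsu_threshold(pixels):
    """Pick the gray level (0-255) that best separates dark from light pixels."""
    histogram = [0] * 256
    for value in pixels:
        histogram[value] += 1

    total = len(pixels)
    sum_all = sum(level * count for level, count in enumerate(histogram))

    best_threshold, best_variance = 0, -1.0
    weight_bg, sum_bg = 0, 0
    for level in range(256):
        weight_bg += histogram[level]
        if weight_bg == 0:
            continue  # no pixels at or below this level yet
        weight_fg = total - weight_bg
        if weight_fg == 0:
            break  # every pixel is already in the dark class
        sum_bg += level * histogram[level]
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        # Between-class variance: larger means a cleaner dark/light split.
        variance = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if variance > best_variance:
            best_variance, best_threshold = variance, level
    return best_threshold


def binarize(image):
    """Map each pixel to 1 (ink) or 0 (background) using Otsu's threshold."""
    threshold = otsu_threshold([v for row in image for v in row])
    return [[1 if v <= threshold else 0 for v in row] for row in image]


# Light, slightly uneven background with three dark "ink" pixels.
image = [
    [200, 210, 205, 198],
    [201,  40,  35, 207],
    [199,  42, 190, 203],
    [204, 208, 202, 206],
]
binary = binarize(image)
for row in binary:
    print(row)
```

After this step, the uneven background collapses to a single value, which makes it simpler for later stages to find where the ink is.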

Reading the Text Lines

Once lines of text are found in the image, further analysis of these lines determines the characters making up the text.

Recognizing characters is hard because they come in all shapes and sizes. Imagine how many fonts are out there, and then imagine how many ways a human can write a single character. The variations are near endless.

Using heuristics to predict each character is possible, but it’s also prone to errors. Instead, PSPDFKit uses Machine Learning (ML), which has the advantage of being able to analyze great quantities of data in order to make best guesses, much like humans do.

An ML model learns from a set of provided examples and can then make estimates about new inputs that resemble the examples it has seen. Because ML can analyze large amounts of data, a model can support many combinations of text formats. Even when an exact combination isn’t present in the original dataset, the model can still make a best guess at what is being represented. Again, this works similarly to how humans estimate.
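The idea of guessing from similar examples can be shown with a toy nearest-neighbor classifier. This is a deliberately simple stand-in, not the model PSPDFKit uses: production OCR relies on far more capable models and far more data. The 3×5 glyph bitmaps and the names below are invented for the example.

```python
# Tiny labeled "training set": each glyph is 5 rows of 3 pixels.
EXAMPLES = {
    "I": ("111", "010", "010", "010", "111"),
    "L": ("100", "100", "100", "100", "111"),
    "T": ("111", "010", "010", "010", "010"),
}


def distance(a, b):
    """Count the pixels that differ between two equally sized glyphs."""
    return sum(
        pa != pb
        for row_a, row_b in zip(a, b)
        for pa, pb in zip(row_a, row_b)
    )


def classify(glyph, examples=EXAMPLES):
    """Return the label of the known example closest to the glyph."""
    return min(examples, key=lambda label: distance(glyph, examples[label]))


# A "T" with one stray pixel of noise; it matches no example exactly.
noisy_t = ("111", "010", "010", "011", "010")
print(classify(noisy_t))
```

Even though the noisy glyph was never seen before, it differs from the stored “T” by a single pixel, so “T” is the best guess.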

Embedding Textual Information Back into a PDF

Now that we know what characters are written and the area they’re located in, it’s time to embed them back into the PDF. In the PDF specification, there’s a concept of the content of a page, which instructs PDF readers how and what text to draw.

But in the case of OCR, there’s no need to render the text. The image representing the text is already part of the PDF, and the reader should not draw over it. Fortunately, the PDF specification allows invisible text to be placed in the content of a page, so the text is available to the reader without obscuring the image behind it.

The work doesn’t stop at making the text invisible though; the correct character needs to be placed in the correct area of the page. Normally this is determined by the font used, with scaling dimensions applied to the font glyphs. The question is, “What font are we using?”

With OCR, a fake (or dummy) font is used to represent the characters present in the document at the scale and position required. Therefore, in the PDF, a special font that can represent any character is embedded so any reader can access the textual information PSPDFKit created!
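To make the invisible text idea concrete, the sketch below builds the page content operators for one recognized word. It relies on the PDF text rendering mode operator `Tr`, where mode 3 means the text is neither filled nor stroked. The helper names are made up, the font resource name `F1` is an assumption that would have to match a font declared in the page’s resources, and the example handles only simple ASCII text.

```python
def escape_pdf_string(text: str) -> str:
    """Escape the characters that are special inside a PDF literal string."""
    return text.replace("\\", "\\\\").replace("(", "\\(").replace(")", "\\)")


def invisible_text_ops(text, x, y, font_size, font_name="F1"):
    """Build content stream operators that place text without painting it."""
    return "\n".join([
        "BT",                               # begin a text object
        f"/{font_name} {font_size:g} Tf",   # select font resource and size
        "3 Tr",                             # rendering mode 3: invisible text
        f"1 0 0 1 {x:g} {y:g} Tm",          # move to the word's position
        f"({escape_pdf_string(text)}) Tj",  # show (but do not paint) the text
        "ET",                               # end the text object
    ])


ops = invisible_text_ops("Tesla (2021)", 72, 700.5, 12)
print(ops)
```

A real implementation would also scale the text so that it covers exactly the area of the recognized word, for example with the horizontal scaling operator `Tz`, and would encode the string according to the embedded font.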

Conclusion

From this blog post, you should now know how powerful OCR can make your simple scanned document. You should also have a high-level understanding of how OCR is performed. Obviously, there are many more nitty-gritty details to achieving good OCR results, but you can leave those parts up to us.

If you’re interested in trying out OCR on iOS, Android, Web, .NET, or Java, then head over to our trial page where you can try any of our products out for free.

When Nick started tinkering with guitar effects pedals, he didn’t realize it’d take him all the way to a career in software. He has worked on products that communicate with space, blast Metallica to packed stadiums, and enable millions to use documents through PSPDFKit, but in his personal life, he enjoys the simplicity of running in the mountains.