Summarize a PDF Document Using Machine Learning and Natural Language Processing

When it comes to retrieving and storing information from the internet, we have an extensive choice of tools to help us collect and compile it. The list ranges from the simplest things, like browser bookmarks and physical notes, to more complicated software such as mind maps and workspaces for personal knowledge management that use databases and Markdown pages.

We’re exposed to a constant flow of news, information, and messages from different sources, so it’s becoming more and more important to be able to quickly and efficiently organize and distill what’s useful for us.

The Power of Conciseness

Being able to condense a topic into a few pages (or lines) is considered a superpower for people who want to organize valuable information in a short amount of time. And up until a few years ago, the process of producing a summary from a given text was considered a task that could only be successfully completed by humans, and not by computers.

However, this is now possible thanks to advances in machine learning and natural language processing. The next sections give a brief overview of these technologies before showing how they can help us.

ℹ️ Note: Rather than reinvent the wheel, these sections will include definitions compiled from other websites, as they do a better job than we can of describing these technologies.

Machine Learning at Your Service

Machine learning (ML) is a field that’s considered part of artificial intelligence (AI). According to Wikipedia, “Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks.”

Natural Language Processing, the Technology You Didn’t Know

Natural language processing (NLP) is another subfield of AI that, according to Wikipedia, is “concerned with the interactions between computers and human language, in particular how to program computers to process and analyze large amounts of natural language data.”

Natural language processing dates back to the 1950s, and some great minds, like Alan Turing, worked on automated interpretation and generation of natural language.

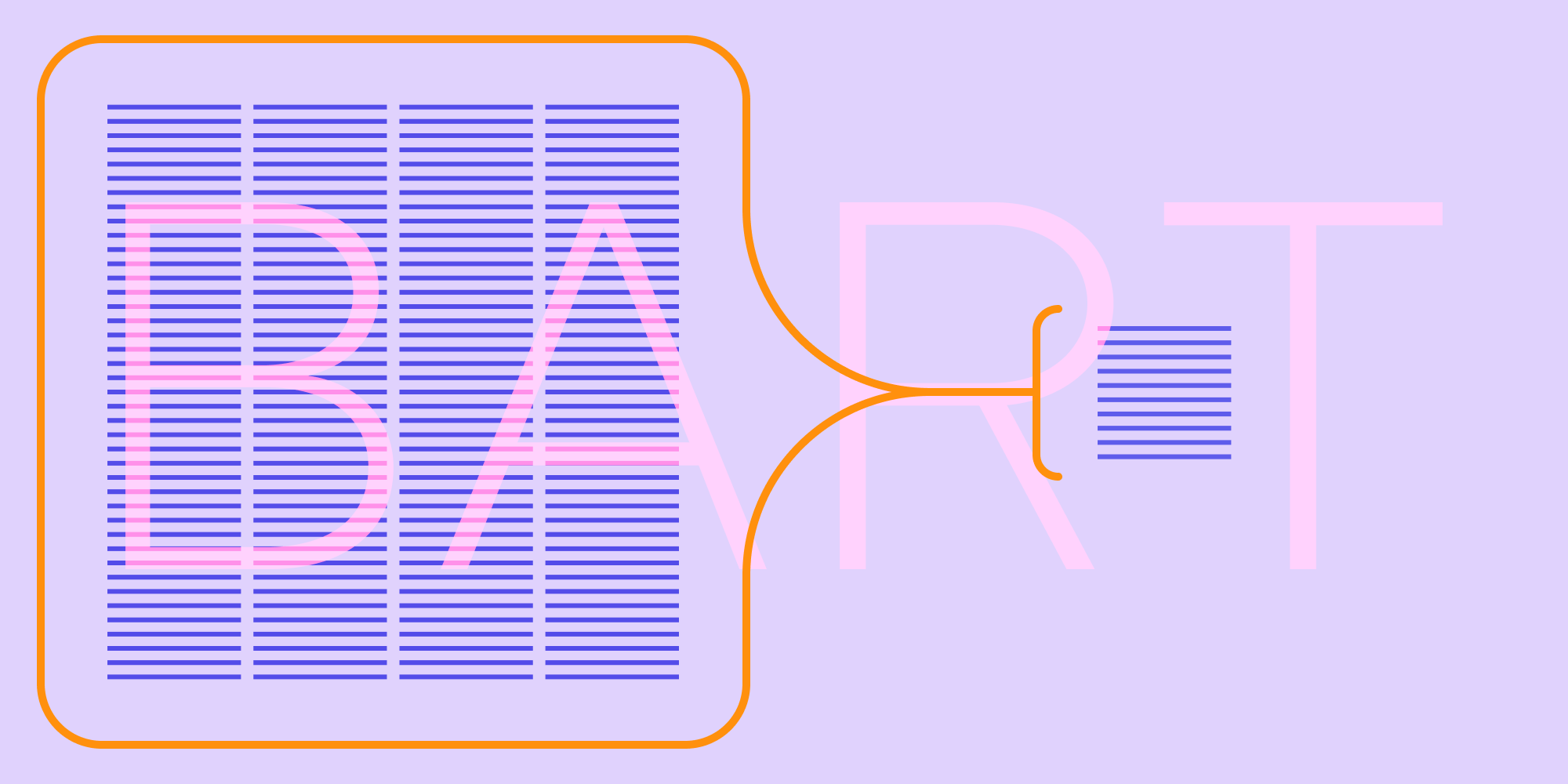

BART, the Model for Text Comprehension Tasks

No, not that Bart! The BART model was proposed for the first time on 29 October 2019 in the research paper entitled BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension by Mike Lewis, Yinhan Liu, Naman Goyal, Marjan Ghazvininejad, Abdelrahman Mohamed, Omer Levy, Ves Stoyanov, and Luke Zettlemoyer.

According to Hugging Face, “BART uses a standard seq2seq/machine translation architecture with a bidirectional encoder, like BERT, and a left-to-right decoder, like GPT.”

So what does that mean? Well, the paper’s abstract states that “BART is particularly effective when fine tuned for text generation but also works well for comprehension tasks. It matches the performance of RoBERTa with comparable training resources on GLUE and SQuAD, achieves new state-of-the-art results on a range of abstractive dialogue, question answering, and summarization tasks, with gains of up to 6 ROUGE.”
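The “ROUGE” in that quote is a family of metrics that score a generated summary by its word overlap with a human-written reference. Papers usually report these scores multiplied by 100, so a gain of 6 means six points. As a rough illustration only, the sketch below computes a simplified ROUGE-1 (unigram overlap) using lowercasing and whitespace splitting. The function name `rouge1` is hypothetical, and real evaluations rely on dedicated packages with proper tokenization and stemming.

```python
from collections import Counter


def rouge1(candidate, reference):
    """Return (precision, recall, f1) based on clipped unigram overlap."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count of each shared word.
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0, 0.0, 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1
```

A summary that repeats only part of the reference scores high on precision but low on recall, which is why the two are usually combined into an F1 value.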

Summarizing a PDF Document the Quickest Possible Way

OK, enough with the jargon and boring details. Data extraction from PDF documents is already a tricky topic for most people. So what we want to know is: how hard would it be to generate a summary of a PDF document without too much hassle?

The answer is: it can be done in fewer than 20 lines of Python code! Using machine learning for NLP and the BART model, we can easily summarize a PDF document written in English.

Show Me the Code!

As a first step, we need to extract the text we want to process from a PDF document. For this task, we can use the Python library pdfplumber.

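With a document named document.pdf, we can extract the text of a single page using this code:

    import pdfplumber

    # Open the PDF; the context manager closes the file when the block ends.
    with pdfplumber.open(r'document.pdf') as pdf:
        # pdf.pages is zero-indexed, so index 1 selects the second page.
        extracted_page = pdf.pages[1]
        extracted_text = extracted_page.extract_text()

    print(extracted_text)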
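The snippet above reads a single page (index 1, which is the second page, because `pdf.pages` is zero-indexed). If you want the whole document, a minimal sketch along these lines joins the text of every page. The helper names `collect_text` and `extract_pdf_text` are hypothetical and not part of pdfplumber; the sketch only assumes that each page object exposes `extract_text()`, as in the snippet above.

```python
def collect_text(pages):
    """Join the text of every page, skipping pages that yield no text."""
    parts = []
    for page in pages:
        # Treat a missing result (None) the same as an empty page.
        text = page.extract_text() or ""
        if text.strip():
            parts.append(text.strip())
    return "\n\n".join(parts)


def extract_pdf_text(path):
    """Open a PDF with pdfplumber and return the text of all its pages."""
    import pdfplumber  # imported here so collect_text works without it

    with pdfplumber.open(path) as pdf:
        return collect_text(pdf.pages)
```

With that in place, `extract_pdf_text("document.pdf")` would return the text of all pages as one string, ready to be assigned to `extracted_text`.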

Next, we can use the transformers library offered by Hugging Face and the BART tokenizer with a distilled BART model specifically trained for text summarization. The code below summarizes the text stored in the extracted_text variable:

    from transformers import BartTokenizer, BartForConditionalGeneration

    # Load the distilled BART summarization model and its tokenizer.
    model = BartForConditionalGeneration.from_pretrained('sshleifer/distilbart-cnn-12-6')
    tokenizer = BartTokenizer.from_pretrained('sshleifer/distilbart-cnn-12-6')

    # Tokenize the text, truncating it to the model's maximum input length.
    inputs = tokenizer([extracted_text], truncation=True, return_tensors='pt')

    # Generate the summary with beam search.
    summary_ids = model.generate(inputs['input_ids'], num_beams=4, early_stopping=True, min_length=0, max_length=1024)

    # Decode the generated token IDs back into text.
    summarized_text = [tokenizer.decode(g, skip_special_tokens=True, clean_up_tokenization_spaces=True) for g in summary_ids]
    print(summarized_text[0])
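One caveat: with `truncation=True`, the tokenizer cuts off any input that exceeds the model's maximum input length, so a long page or document is only partly summarized. A possible workaround, sketched below under stated assumptions, is to split the text into smaller pieces and summarize each one. The names `chunk_words` and `summarize_in_chunks` and the 400-word default are hypothetical; word counts are only a rough proxy for token counts, and `summarize` stands for any function that maps a string to its summary (for example, a wrapper around the generation code above).

```python
def chunk_words(text, max_words=400):
    """Split text into consecutive chunks of at most max_words words."""
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


def summarize_in_chunks(text, summarize, max_words=400):
    """Summarize each chunk with the given callable and join the results."""
    return " ".join(summarize(chunk) for chunk in chunk_words(text, max_words))
```

Splitting on a fixed word count can cut a sentence in half, so splitting on paragraph or sentence boundaries may give cleaner summaries at the cost of a little more code.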

And voilà, the code prints a summary of the extracted text! 😎

The full implementation can be found in this Colab file. Google Colab is a free Jupyter notebook environment that runs entirely in the cloud. Running all the steps from the beginning lets you load a document and check the actual output in real time.

Conclusion

The goal of this blog post was to introduce you to machine learning and natural language processing applied to PDF documents, showing the quickest possible way to summarize a text. This task requires a wide skillset, and depending on the type of language involved (e.g. something scientific, academic, or conversational), the type of model you need will vary.

If you feel you can do it in fewer than 20 lines of code, contact me on Twitter and I’ll be happy to look at your solution. 😀