Dynamic Linking in WebAssembly

If you’ve been using WebAssembly for a while, you’ll be familiar with compiling all your working code into one binary for distribution. In “normal” binary distribution, such as when building executables for Unix systems, this is equivalent to statically linking your code so it can run on its own with no external dependencies.

When a project starts to grow larger and more distributed, it may be time to start looking for ways to reduce binary size to speed up download and instantiation times of the WebAssembly binary. If you’re using the Emscripten compiler, the simple option is to first look into the various optimization avenues provided. But if you’ve truly exhausted all those avenues, you can look into the topic of this post: dynamic linking.

Static vs. Dynamic Linking for WebAssembly

💡 Tip: For a more in-depth discussion of static linking versus dynamic linking, check out Andrew Birnberg’s post on Medium.

Static linking is great for small projects, but for larger projects, or for projects that share code with other large projects, it’s pretty wasteful. Why build and distribute the same code multiple times? This is the reason dynamic linking exists and why it’s the default for most distributed applications across many operating systems. With dynamic linking, the symbols are still checked when the project is built, but their code isn’t placed in the binary for distribution. Instead, the final linking occurs at runtime, when suitable libraries are loaded to supply the missing symbols.

For WebAssembly, dynamic linking has been a less obvious fit because the runtime environment contains no commonly linked libraries. On top of that, the tooling for it was experimental until not long ago and has been underutilized in the community.

Recently, however, there’s been a push to improve dynamic linking solutions and even support a programmatic interface to the runtime dynamic linker (dlopen). This opens up the possibility of reducing the distribution size of binaries and reducing load times at startup by only distributing and instantiating what you need, when you need it.

Static Linking — A Single WebAssembly Binary

First, let’s look at the common method of linking a WebAssembly target.

As mentioned in the introduction, the usual approach is to statically link a full project and resolve all symbols during compilation. That applies to all symbols, whether they come from your own code or from the standard library.

With Emscripten, static linking is the default option; there’s no need to do anything special. Just compile your source and link it all together. And because Emscripten handles some common libraries, like the standard library, some burden is taken off the developer:

emcc a.cpp -c -o a.o
emcc b.cpp -c -o b.o
emcc a.o b.o -o project.js

In addition to the common libraries linked, there are also options for some commonly used libraries under the Emscripten Ports project. These can be linked by referencing them on the command line:

emcc a.o b.o -s USE_SDL=2 -o project.js

The process of creating a WebAssembly binary is all fairly straightforward, but there are drawbacks. One of those drawbacks is the size of the binary. Because we’re linking everything in one binary, large projects can be substantial in binary size, and any extra megabyte in binary size can cause many extra seconds of delay in download times on slow connections.

💡 Tip: If you find your binaries are bloated, make sure you try all the optimization tips listed in the Emscripten guides.

So how do you reduce the original binary size overhead? That all depends on your project requirements and limitations.

Side Modules — Dynamic Linking Multiple WebAssembly Binaries

If you’re distributing multiple binaries with shared symbols, your first option is to explore dynamic linking. Alon Zakai, the maintainer of Emscripten, has been playing with dynamic loading for a while, but it’s only recently that it’s started to take shape as a stable feature.

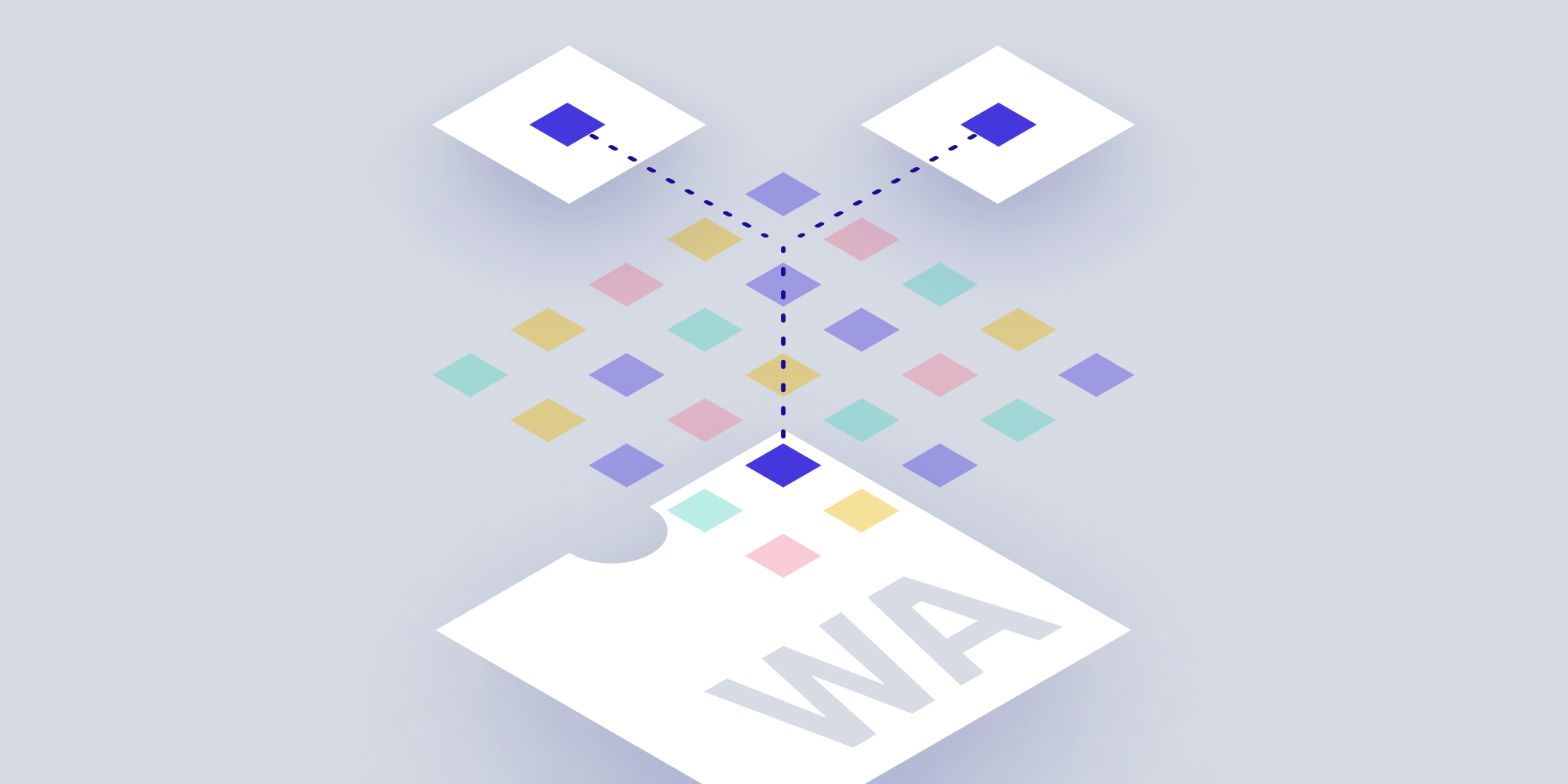

In Emscripten, dynamic linking consists of one main module and one or more side modules, all of which are WebAssembly binaries. The main module holds all the symbols required from the common libraries, along with any other project symbols you choose to compile. The side modules hold any other code you desire, and importantly, cannot operate without being loaded in conjunction with a main module.

The relationship between the main and side modules is very much like the relationship between an executable binary and shared libraries in the Unix world, i.e. shared libraries aren’t operational without instructions from the executable.

Creating the modules only requires a few extra options on the command line:

emcc b.cpp -s SIDE_MODULE=1 -s EXPORT_ALL=1 -o b.wasm
emcc a.cpp b.wasm -s MAIN_MODULE=1 -o project.js
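
As a rough illustration of how code might be split between the two files, here is a minimal sketch. The function names addNumbers and sumOfThree are hypothetical and chosen only for this example, and both files are shown in one listing for brevity.

```cpp
// --- b.cpp: built as the side module (b.wasm) ---
// Hypothetical helper that lives only in the side module.
int addNumbers(int a, int b) {
    return a + b;
}

// --- a.cpp: built as the main module (project.js and project.wasm) ---
// Only the declaration is visible here. The definition is supplied by
// b.wasm when the two modules are linked together at load time.
int addNumbers(int a, int b);

// Hypothetical main-module function that depends on the side module.
int sumOfThree(int a, int b, int c) {
    return addNumbers(addNumbers(a, b), c);
}
```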

An important point to note is that symbols that aren’t resolved won’t cause any errors until they’re invoked. That can make it a little tricky to diagnose what’s missing, unless you’re running through all the code paths. To give an extra helping hand during development, you can build with the -g flag and -s ASSERTIONS=1 option, which will more accurately describe the missing symbols.

So, What’s the Advantage of Side Modules?

If you haven’t guessed it yet, side modules come into their own when multiple main modules rely on the same side module — meaning that a shared side module only has to be downloaded once, which reduces download overhead and speeds up overall startup.

Another advantage, more unusual and maybe even a little dangerous, applies when you know that the symbols in a side module aren’t needed. For example, the code path you’re following may never call them. In that case, you don’t have to download and link the side module at runtime at all, which avoids distributing code that isn’t required in a particular instance.

But do be aware: Not loading the side module only works because there’s no symbol checking upon instantiation. If, for some reason, that mechanism changes and missing symbols must be resolved upon instantiation, the flow will break. That’s why I consider it to be a little dangerous.

To achieve the above, make sure you turn off any warning on undefined symbols at compile time and remove the side module linking:

emcc b.cpp -s SIDE_MODULE=1 -s EXPORT_ALL=1 -o b.wasm
emcc a.cpp -s MAIN_MODULE=1 -s WARN_ON_UNDEFINED_SYMBOLS=0 -o project.js

Because ignoring symbol warnings at compile time is dangerous and could cost you considerable development time, I’d like to introduce a safer way. What if you could delay dynamic linking until runtime?

dlopen — Designing a Plugin System

There’s another option, and that’s programmatic access to the linker, which is basically calling dlopen.

You may have used dlopen in the past if you were creating some type of plugin system — maybe an extension to a current project that’s distinctly different to your main code, or even when offering a vector for customers and other developers to extend the functionality of your code.

The great news is that dlopen is supported in Emscripten. That means you can open a compiled side module with dlopen and use dlsym to resolve required symbols at runtime. You’ll never need to know what’s required ahead of time, and instead, you can programmatically decide what libraries are needed and when.
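
To make this concrete, here is a minimal sketch of opening a side module with dlopen and reporting failures through dlerror. The helper name openSideModule and the path in the usage note are assumptions for illustration. Under Emscripten the path refers to a file in the virtual file system.

```cpp
#include <cstdio>
#include <dlfcn.h>

// Opens a side module by path and returns its handle, or nullptr on failure.
// openSideModule is a hypothetical helper, not part of any library.
void* openSideModule(const char* path) {
    void* handle = dlopen(path, RTLD_NOW);
    if (handle == nullptr) {
        // dlerror describes the most recent dlopen failure, if any.
        const char* error = dlerror();
        std::fprintf(stderr, "Could not open %s: %s\n", path,
                     error != nullptr ? error : "unknown error");
    }
    return handle;
}
```

A caller could then write something like `void* plugin = openSideModule("/b.wasm");` and only continue to dlsym when the returned handle is not null.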

However, there are drawbacks to dlopen and Emscripten.

The first is that if you’ve ever used dlopen in the past, you’ll know it can be tricky to find C++ symbols at runtime. This is because C++ names are mangled at compile time, making it hard to look them up by name later. That’s why you’ll commonly see interfaces described in C. C symbol names are, in most cases, not mangled, so myFunc can be found by searching for myFunc with dlsym. But that’s not everything: you also need to know the declaration of myFunc to call it correctly. That means you have to have a well-defined interface ahead of time, and declarations need to be kept simple, usually using plain types. As you can imagine, this is limiting and often requires a lot of type conversion, which is error-prone.
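
The lookup and the cast to a known signature might look like the sketch below. It assumes the side module exports myFunc with C linkage and the signature int(int). That signature, and the helper name callMyFunc, are assumptions made for this example only.

```cpp
#include <dlfcn.h>

// Assumed declaration inside the side module (hypothetical signature):
//   extern "C" int myFunc(int value);
// The main module has to repeat that signature as a function pointer type.
using MyFuncPtr = int (*)(int);

// Looks up myFunc in an already opened module and calls it.
// Returns false, leaving *result untouched, when the symbol is missing.
bool callMyFunc(void* handle, int argument, int* result) {
    void* symbol = dlsym(handle, "myFunc");
    if (symbol == nullptr) {
        return false;
    }
    // dlsym only returns an untyped pointer, so the cast is unchecked:
    // nothing verifies that the real function matches MyFuncPtr.
    MyFuncPtr myFunc = reinterpret_cast<MyFuncPtr>(symbol);
    *result = myFunc(argument);
    return true;
}
```

Because the cast is unchecked, a mismatch between MyFuncPtr and the real declaration is not caught at compile time. In WebAssembly, an indirect call through a mismatched signature traps at runtime, which is one more reason to keep the interface small and stable.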

The second issue is when to fetch (download) the extra side module for use on the web. In JavaScript? In C++? Before WebAssembly instantiation? Just before the dlopen?

These are all important questions because, depending on the requirement, the complexity of the solution can vary wildly.

If you know your module requirements up front, the solution is less complex. That’s because you can download both your main module and side module ahead of time, and you can load the side module into the WebAssembly virtual file system after instantiation.

Fetching Side Modules Asynchronously

You may be wondering what makes it so difficult to download the side module only when you need it. That would be the logical solution, because you can instantiate the main module earlier and run any necessary code before the side module is needed, speeding up startup. The issue is deciding where the download is triggered. If you can make that decision in JavaScript, that’s best, because you can use the web Fetch API and await in JavaScript. But if you want to complete the fetch in C++, you’ll need some type of asynchronous support.

For fetching in C++, you have many options:

- Async inline JavaScript in C++ (calling fetch in a JavaScript block), nested in C++ code
- Async inline JavaScript in C++ (calling fetch in a JavaScript block), at the top level with a small stack
- Some JavaScript <-> C++ dance instructing JavaScript to download the binary and run the applicable C++ (I won’t discuss this option because it requires a heavy interface design)

Emscripten Fetch API

Using Emscripten’s Fetch API seems like the prime candidate upon first glance, but again, it depends on your current project setup.

To use the Fetch API, you need to deal with its asynchronous nature in some way, either with callbacks or by using the synchronous API provided. If you can afford to delay loading and write all the library opening and calling code in a callback, using the Fetch API with callbacks is ideal. Sadly, many C++ projects weren’t designed around asynchronicity and callbacks, so that may be off the table.
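
For reference, the callback variant could be sketched roughly as follows. The helper names, the /b.wasm path, and the decision to call dlopen directly in the success callback are assumptions for illustration. The Emscripten-specific part is guarded so that the file-writing helper can also be compiled natively, and the build is assumed to link with -s FETCH=1.

```cpp
#include <cstddef>
#include <cstdio>
#include <dlfcn.h>

// Writes downloaded bytes to a file so dlopen can find them. Under
// Emscripten this path lives in the in-memory virtual file system.
bool saveBytes(const char* path, const char* data, std::size_t size) {
    std::FILE* file = std::fopen(path, "wb");
    if (file == nullptr) {
        return false;
    }
    const std::size_t written = std::fwrite(data, 1, size, file);
    const bool closed = std::fclose(file) == 0;
    return written == size && closed;
}

#ifdef __EMSCRIPTEN__
#include <cstring>
#include <emscripten/fetch.h>

// Hypothetical location of the side module in the virtual file system.
static const char* kSideModulePath = "/b.wasm";

// Success callback: store the bytes, then open the module.
void onSideModuleFetched(emscripten_fetch_t* fetch) {
    const std::size_t size = static_cast<std::size_t>(fetch->numBytes);
    if (saveBytes(kSideModulePath, fetch->data, size)) {
        void* handle = dlopen(kSideModulePath, RTLD_NOW);
        if (handle == nullptr) {
            std::fprintf(stderr, "dlopen failed for %s\n", kSideModulePath);
        }
        // From here on, dlsym can resolve symbols from the handle.
    }
    emscripten_fetch_close(fetch);  // Frees the downloaded data.
}

// Error callback: report the HTTP status and release the request.
void onSideModuleFailed(emscripten_fetch_t* fetch) {
    std::fprintf(stderr, "Fetching %s failed with HTTP status %d\n",
                 fetch->url, fetch->status);
    emscripten_fetch_close(fetch);
}

// Starts the asynchronous download and returns immediately.
void fetchSideModule(const char* url) {
    emscripten_fetch_attr_t attr;
    emscripten_fetch_attr_init(&attr);
    std::strcpy(attr.requestMethod, "GET");
    attr.attributes = EMSCRIPTEN_FETCH_LOAD_TO_MEMORY;
    attr.onsuccess = onSideModuleFetched;
    attr.onerror = onSideModuleFailed;
    emscripten_fetch(&attr, url);
}
#endif
```

Everything that depends on the side module has to start from the success callback, which is exactly the restructuring that many existing C++ codebases find hard to accommodate. Depending on the browser and the size of the side module, a synchronous dlopen on the main thread may also be restricted, so treat that call as something to verify for your target.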

That leaves the synchronous API, which is only available in two configurations. The first is building with the --proxy-to-worker flag, which doesn’t work if you’re using the MODULARIZE flag. The second is building with -s USE_PTHREADS=1, which uses POSIX Threads (pthreads), but then the synchronous fetch cannot run on the main thread. And using pthreads adds a large overhead to the project, so if you’re not already utilizing them, it’s most likely not an option.

Async Inline JavaScript in C++ — Nested in C++ Code

The next option is to write the JavaScript-fetching code inline and use the asyncify feature to handle awaiting the JavaScript in C++. That works great, because you can call your inline JavaScript from anywhere in your C++ project and retrieve the library binary synchronously. But again, this adds significant bloat to the binary, especially if you have a large complicated project:

#include <emscripten.h>

// Downloads `url` and writes the response body to `savePath` in the
// Emscripten virtual file system. Returns true on success.
EM_ASYNC_JS(bool, fetchAsset, (const char* url, const char* savePath), {
  const response = await fetch(UTF8ToString(url), {
    credentials: 'same-origin',
  });
  if (!response.ok) {
    return false;
  }
  const data = await response.arrayBuffer();
  try {
    FS.writeFile(UTF8ToString(savePath), new Uint8Array(data));
    return true;
  } catch (e) {
    return false;
  }
});

Async Inline JavaScript in C++ — At the Top Level with a Small Stack

The last option uses the exact same method as above, but only calls the inline JavaScript in very specific places. The code in the example above becomes bloated because analysis is required to determine which functions may be on the stack when the EM_ASYNC_JS function is called, so that those functions can be wrapped with special async/await support. If any of the calls are indirect (for example, a call to a virtual member function), the analysis has no idea what will be called, and all code must be wrapped with the async/await code, causing bloat.

To avoid this bloat, you can avoid calling EM_ASYNC_JS from indirect places and use the ASYNCIFY_IGNORE_INDIRECT flag to only analyze direct calls, reducing code footprint and bloat. But you have to be very certain where the inline JavaScript will be called from, which is why I’d advise calling it from the top-level API exposed to the web, meaning the stack would be very minimal and understandable.

Conclusion

As you can see, dynamic linking sounds like a no-brainer at first glance, but each of the implementations comes with tradeoffs. It’s up to you to decide if any of the dynamic linking models fit your project requirements, and then determine if the approach is maintainable.

My advice would be to explore other optimization avenues before hunting down dynamic linking options, as the various optimization levels and link-time optimization can have a far greater effect on binary size.

When Nick started tinkering with guitar effects pedals, he didn’t realize it’d take him all the way to a career in software. He has worked on products that communicate with space, blast Metallica to packed stadiums, and enable millions to use documents through PSPDFKit, but in his personal life, he enjoys the simplicity of running in the mountains.